When the Cloud Reminded Me Who's Really in Control

Last Tuesday I was at the agency finishing the homepage of a website to show a client for review. I hit send on the email with the link at precisely 14:55 local time (13:55 UTC). Twenty-five minutes later the reply came in: "I can't access it, I refreshed it several times and it just shows some error."

I tried to open Webflow and the Designer wouldn't load. I opened the same link I had sent to the client and got nothing, just a 500 error. I searched X for anything related to Webflow, and I wasn't the only one with problems. Hosted sites that had not been cached returned 500 errors. For some people, the CMS was completely broken, with items gone and components refusing to appear. I kept refreshing Webflow's status page and scrolling X to figure out what was happening, so I could tell the client when he could finally review the structure and design of his new website.

Webflow posted regular updates on X for more than three hours. The detailed explanation came the next day.

Basically, one of their CMS database clusters hit a hidden capacity limit imposed by their cloud provider. The monitoring dashboard showed only a tiny fraction of storage in use. Behind the scenes, the database engine had been quietly reserving space for millions of database files until the entire allocation filled up. No data disappeared, but the service stayed disrupted for most of the afternoon and, for some users, into the next morning.

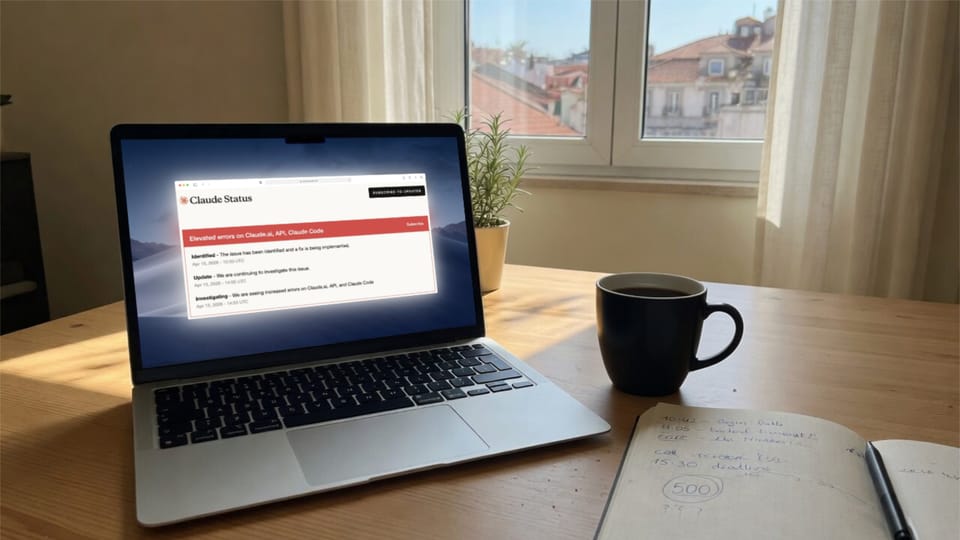

The following day, also in the afternoon, I was using Claude to help me improve and update the SEO of another client's website. All of a sudden, every prompt returned an error.

The status page confirmed widespread issues across Claude, the API, and Claude Code. Downdetector was filling up with reports. The whole thing lasted a couple of hours and cleared by early evening.

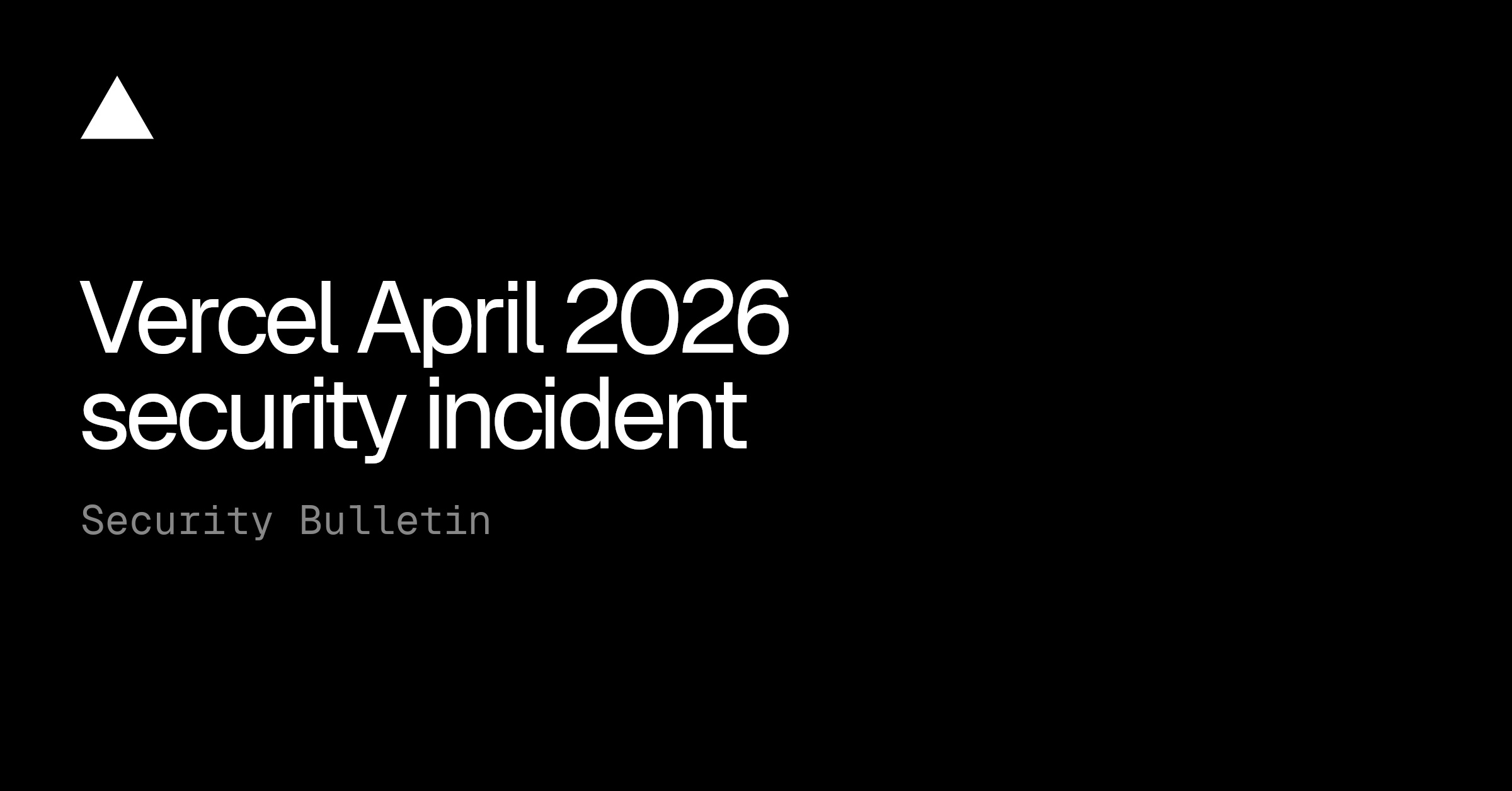

A few days later, it was Vercel.

They had discovered unauthorized access to some internal systems through a third-party AI tool one of their employees used. A limited number of customers had credentials exposed, so they recommended rotating environment variables (especially any not marked sensitive) and checking activity logs.

My small experimental project on Vercel showed nothing unusual in the activity logs. No credentials compromised, no strange entries. Still, the notice landed like another quiet reminder.

All three companies stayed transparent about the incidents. Webflow published a detailed incident report, Anthropic updated their status page in real time, and Vercel engaged outside experts and kept people informed. I respect and value that; it beats silence. When you have millions of customers relying on your services or platforms, that's the least you can do.

Yet transparency does not give back the afternoon I spent waiting for a client to be able to see and review the site. It does not bring back the time I lost while Claude "recovered", time that should have gone into finishing work. And it does not erase the extra caution I now apply after reading the Vercel bulletin and receiving their email.

I keep using these tools because they deliver real speed and improve my workflow considerably. Webflow lets me prototype fast and share live previews that clients can actually interact with in real time. Claude sharpens my writing and research in ways that save me several hours of work. Vercel deploys side experiments without any friction. To me, it's absolute convenience.

But the trade-off becomes obvious when something breaks at the worst possible time. The work already lives on their servers, inside their editor, or behind their API. When the service stops, I have no quick way to continue exactly where I left off. Most of the time I just wait, or switch to some other work that needs doing. I explain the situation to the client and hope he understands, but what really gets to me is losing the rhythm of the day.

This is not a complaint against any company. Outages and security incidents happen. What stayed with me from last week was how fast the dependency becomes visible the moment a platform goes dark.

I still use these services most days, because they really improve my workflow at the agency. But these back-to-back disruptions made me pause and look more carefully at where my own work actually lives. I always keep local exports of important client assets. That has always been mandatory at the agency: no important or sensitive data can be "held hostage" on some cloud service. But after all this, I have slowly started to separate out the small pieces of my workflow that do not require a live connection.

The cloud gives velocity and convenience when everything runs smoothly. When it doesn't, you feel exactly how much of your day depends on it staying online.

I'm not planning to abandon cloud-based services or anything like that. I'll keep using the ones I really need, where they make sense. But I've started paying closer attention to what I leave on someone else's servers, and to the small habits that make the next quiet day hurt a little less.

May The Code Be With You! 🚀
