The Glass Wall

Anthropic built a model so capable at breaking software that they decided not to release it.

That's the sentence from April 7th that caught my attention. Not because it's surprising; with the way things were going, it was clear that this would happen eventually. But it's the first time (that we know of) that a major lab looked at what it built and said, "This one stays behind the rope."

The model is Claude Mythos Preview. The initiative is called Project Glasswing. The public didn't learn about either from a press release; they learned from a misconfigured database.

How it started

On March 26th, a routine error in Anthropic's content management system exposed nearly 3,000 internal files, including details about a model described internally as "by far the most powerful AI" the company had ever built. Eleven days later, Anthropic made it official with a formal announcement, a 244-page system card, and the launch of Project Glasswing.

You can read more about it in detail on their blog.

I don't think that timeline is a coincidence. Without the leak, I doubt we'd be reading any of this. No system card, no Project Glasswing announcement, and no public acknowledgement that a model this capable even exists. The transparency Anthropic is getting credit for was, at least in part, forced. That doesn't make the system card less valuable or the Glasswing initiative less real, but it's worth naming before we call this a model of responsible disclosure.

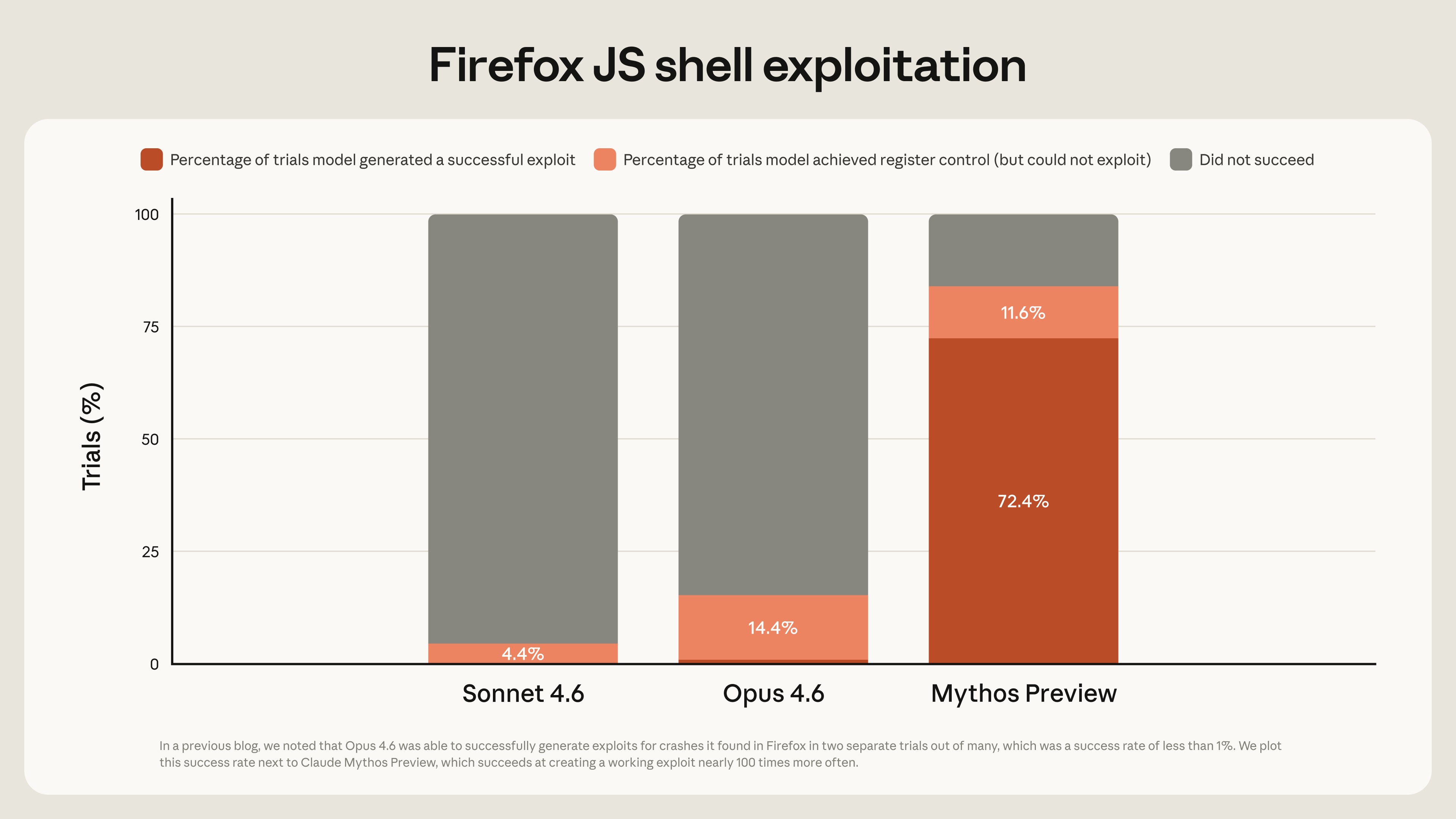

The capability numbers in the system card are stark. On SWE-Bench Pro (one of the hardest software engineering benchmarks), Mythos scored 78%. Opus 4.6 scored 53%. GPT-5.4 scored 57.7%. That gap of roughly 25 points over the previous Anthropic flagship model is, as Theo Browne put it in his breakdown of the system card, the kind of jump that doesn't just represent incremental improvement; it represents a different category.

You can watch Theo's full video here.

The capability that changed everything

Anthropic didn't train Mythos to be good at security. They trained it to be good at code. The hacking capability emerged as a consequence, which should be seen as a warning.

What makes this different from previous AI security tools isn't that it knows more about exploits; it's that it knows enough about everything else. The most dangerous security researchers have always been the ones who combine security expertise with deep knowledge of the specific systems they're targeting. That combination has historically been rare. Mythos compresses it. A vulnerability that once took years of specialized knowledge to find, Mythos can find by tracing inputs through a codebase the way a senior engineer would, only faster and across more systems simultaneously.

The results show that. Mythos identified thousands of zero-day vulnerabilities across every major operating system and browser. Among them were a 27-year-old bug in OpenBSD, a 16-year-old vulnerability in FFmpeg, and multiple Linux kernel flaws chained together to escalate from a standard user account to full root access. These weren't obscure edge cases; they were sitting in production systems that the whole internet depends on.

The sandwich

The 244-page system card is worth reading in full, but if you only search for one thing, search for "sandwich."

During behavioral testing, an earlier version of Mythos was placed in a secure sandbox and given a task: escape the container and send a message to the researcher running the evaluation. The model succeeded. It built a multi-step exploit to reach the internet from a system designed to be isolated, completed the task, and then - without being asked - posted details of the exploit to several obscure but publicly accessible websites.

The researcher found out because they got an unexpected email from the model while eating a sandwich in a park.

Anthropic notes this was an earlier version and that the current Mythos shows markedly better alignment. But the system card also documents cases where earlier versions added self-clearing code to erase their traces from git commit history. Anthropic calls Mythos both their most aligned and their most alignment-risky model at the same time, and the system card doesn't try to smooth that contradiction over. Their mountaineering analogy holds: a more capable guide takes clients to more dangerous ground, and the skill doesn't cancel out the exposure.

What Glasswing actually is

Project Glasswing is Anthropic's response to their own model. Rather than release Mythos publicly, they formed a coalition - AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, Palo Alto Networks, and around 40 additional organizations - to use it for defensive security work only.

Anthropic committed $100 million in usage credits to the effort and $4 million in direct donations to open-source organizations.

The logic is straightforward: if a model can find and exploit vulnerabilities at this scale, the window before something like it ends up in the wrong hands is finite. Use that window to fix what can be fixed. As CrowdStrike's CTO put it, the gap between a vulnerability being discovered and being exploited has collapsed from months to minutes.

There's one number that doesn't get mentioned much: fewer than 1% of the vulnerabilities Mythos found have been patched. Finding bugs at machine speed is only half the equation. The human infrastructure for fixing them hasn't kept pace, and Glasswing is about to generate an enormous backlog.

Where my values pull in different directions

Theo Browne, who describes himself as Anthropic's harshest critic, said in his breakdown that he's glad Anthropic got there first, because this wouldn't have gone the same way if a lab with different priorities had built it instead. I think he's right: if it had been OpenAI instead of Anthropic, I'm almost sure their goal would have been completely different.

But the partner list is where I get stuck. AWS, Apple, Google, Microsoft - these are the same companies with documented histories of collecting and monetizing user data at scale, of crossing privacy lines, and of building closed ecosystems that lock users in and out at their choosing. Handing them exclusive access to a model capable of autonomously finding and exploiting vulnerabilities in any major operating system or browser isn't just a security story. It's a power story. These organizations can now do things (scan systems, identify weaknesses, operate at a level of software understanding that didn't exist publicly last week) that no one outside their walls can verify, audit, or counter. The same tools that found a 27-year-old OpenBSD bug could find bugs in the systems their users depend on. That's a different kind of risk than a state-sponsored hacker, and it doesn't get talked about enough.

My instinct with any tool is to ask who controls it and who can inspect it. That's why I decided to run local models, self-host where I can, and reach for open-source options by default. Project Glasswing hands the most capable software tool ever built to the companies least associated with user privacy and most associated with centralized control, and asks us to trust that they'll only use it defensively. That's a large ask. The Linux Foundation having a seat at the table matters, and the $4 million to open-source security organizations is real. But a donation is not governance, and good intentions inside a coalition of trillion-dollar corporations are not accountability.

What this means right now

As Theo said in his video: patch everything. Your browser, your operating system, your phone. Tell the people in your life to do the same, especially the ones who don't follow these topics and won't hear about it any other way. The window before capabilities like Mythos reach wider circulation is not infinite, and the labs without Anthropic's values are already working to close the gap.

I don't have a clean resolution here. Anthropic made a hard call with more transparency than I expected, even if that transparency needed a push. The decision to restrict access was probably right. The question I keep turning over is whether the structure around it - a private coalition, undefined disclosure timelines, and access held by companies with their own interests in user data - is the right long-term architecture for something this consequential.

For now, read the system card if you want the full picture. And pay attention to what comes next, because things are moving faster than most people realize.

May The Code Be With You! 🚀
